Alexa Champions is “a recognition program designed to honor the most engaged developers and contributors in the community”. In a previous post, we revealed that some very well rated skills were hidden several hundred pages deep in the Alexa marketplace. Eric Olson, co-founder of 3PO-Labs and an Alexa Champion, pointed out that all 3 of these skills were by Alexa Champions. It seems odd for skills from Champion developers to be rendered essentially undiscoverable by the very company that features them. This raises the question of whether Alexa Champions have any objective advantage over regular developers. Last week, we looked at skills’ ratings. Today, we will look at Alexa Champion skill rankings.

Analysis

Below are results for 13 Alexa Champions. Our analysis did not include Invoked Apps because their wildly successful skills make them an extreme outlier. We excluded VoiceXP because their 36 skills make it hard to match the developer with an industry average (only one other developer has 36 skills). We also excluded 9 developers for having only 1 or 2 skills. Mark Tucker’s skills are distributed across a few developers, so unfortunately, his were also excluded from the analysis.

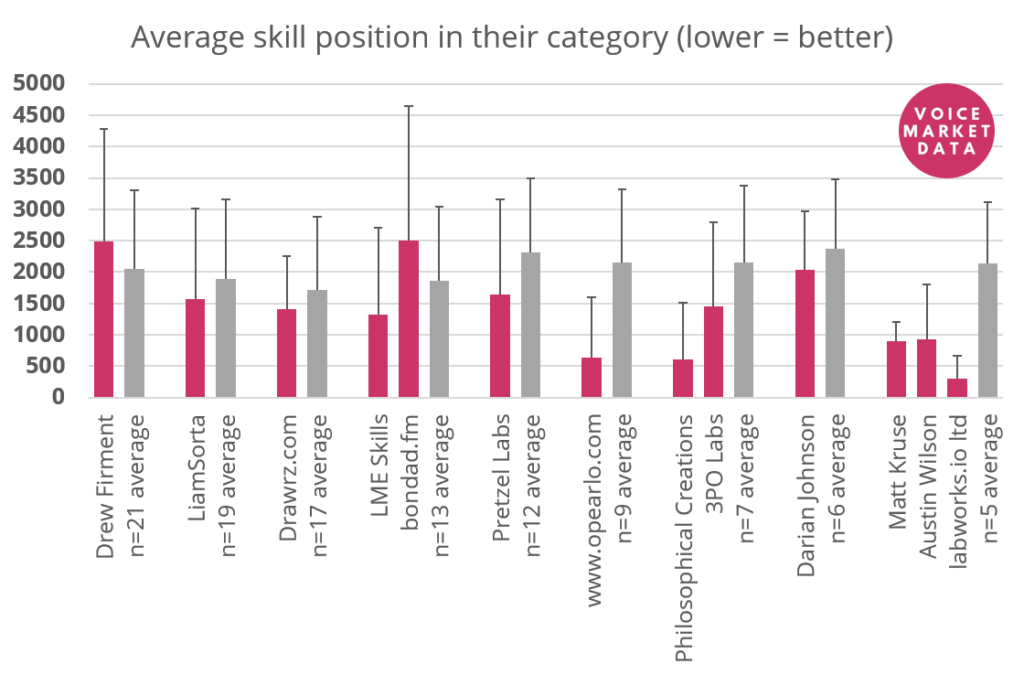

In the graphs, you will see the name of each Alexa Champion developer next to “n=# average”. This is the average across all developers who published “#” skills (the same number that the Alexa Champion published). The thin black bars represent the variability around the average. A small bar means there was less variability and most data points were close to the average. A larger bar means that the data points were far from the average (in either direction).
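For readers who want to reproduce this kind of matched comparison, here is a minimal sketch. It rests on assumptions that the post does not spell out: that the baseline is the mean of per-developer average positions, and that the black bars show a sample standard deviation. The function name `matched_baseline` and the example data are hypothetical.

```python
from statistics import mean, stdev


def matched_baseline(positions_by_developer, n_skills):
    """Return (average, spread) for developers with exactly n_skills skills.

    Assumption: the baseline is the mean of per-developer average positions,
    and the spread is the sample standard deviation of those averages.
    """
    per_developer_means = [
        mean(positions)
        for positions in positions_by_developer.values()
        if len(positions) == n_skills
    ]
    if not per_developer_means:
        raise ValueError(f"no developers with {n_skills} skills")
    # stdev needs at least two values; report zero spread for a single developer.
    spread = stdev(per_developer_means) if len(per_developer_means) > 1 else 0.0
    return mean(per_developer_means), spread


# Hypothetical data: developer name -> category positions of their skills.
example = {
    "dev_a": [10, 20, 30],
    "dev_b": [100, 200, 300],
    "dev_c": [5, 15],          # only 2 skills, so not part of the n=3 group
    "dev_d": [40, 50, 60],
}

average, spread = matched_baseline(example, n_skills=3)
print(f"n=3 average position: {average}, spread: {spread:.1f}")
```

A Champion with 3 skills would then be compared against this “n=3 average”, with the spread drawn as the thin black bar.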

Category position

The pink bar represents the Alexa Champion while the grey bar represents the average from all similarly prolific skill developers. The graph below shows the average position of a skill developed by that publisher. A lower average means that the skills are more likely to be in the first pages of a given category. There are 16 skills per page. The assumption is that people use the category pages to discover new skills, so it is beneficial for a skill to be in one of the top pages. Amazon would presumably want to promote quality skills on the first few pages of their marketplace to showcase good user experiences.
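To make the position numbers more concrete, the small sketch below converts a category position into a page number using the 16 skills per page mentioned above. It assumes positions are counted from 1 and that every page is full; the helper name `page_for_position` is hypothetical.

```python
SKILLS_PER_PAGE = 16  # page size stated in the post


def page_for_position(position: int) -> int:
    """Return the 1-based category page that holds a 1-based skill position."""
    if position < 1:
        raise ValueError("position must be 1 or greater")
    # Positions 1-16 land on page 1, 17-32 on page 2, and so on.
    return (position - 1) // SKILLS_PER_PAGE + 1


for position in (1, 16, 17, 1600):
    print(position, "-> page", page_for_position(position))
```

Under these assumptions, a skill with an average position of 1600 sits on page 100 of its category.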

Here, we see that most, but not all, Alexa Champions have a better-than-average mean skill position. The thin black bar, the variability, also tells us that while their average skill is better positioned than the average, several of their skills are positioned worse than average. There are a few notable exceptions where the variability is very small (shout-out to labworks.io), but for most developers, some of their skills are buried behind thousands of other (probably lower quality) skills.

This is interesting because page rankings are entirely controlled by Amazon. The order in which skills are displayed is controlled by an algorithm, but Amazon could give priority to skills from its selected developers. Also, remember that these developers had, on average, better rated skills than their paired non-champion developers. This raises the question: why doesn’t Amazon favour its Champions more heavily?

2 thoughts on “Deep dive into Alexa Champions: Ranking (November 2019)”